Crawling is how search engines like Google explore the internet. Have you ever wondered how Google finds your website? It’s like having friendly robots that read pages all across the internet. These robots help search engines find and organize web pages so people can search for them easily.

What is Crawling in SEO?

Before diving into crawling, it might help to understand the basics of SEO. Check out our article on “What is SEO?” to get started.

Crawling is when search engines like Google send out special internet robots (we call them “bots” or “spiders”) to read websites. Think of these bots as curious readers who go from page to page on your website, looking at everything you’ve written.

These bots are like librarians. Just as librarians need to know about all the books in a library, search engine bots need to know about all the pages on your website. They do this by following links from one page to another, just like you might click links when browsing the internet.

It’s important to know that crawling is different from how Google ranks your website. Crawling is just about finding and reading your pages. It’s the first step in getting your website to show up in Google searches.

Here’s how crawling is different from other steps:

- Crawling: Bots explore the website.

- Indexing: The website gets added to the search engine’s library.

- Ranking: Search engines decide which pages show up first when you search.

How Crawling Works

Crawling starts with a list of websites. Bots look at these websites and follow links to find more pages. Let’s break down how crawling happens:

- First, the search engine bot visits your website’s main page or home page

- Then, it looks for links to other pages on your site

- The bot follows these links to find more pages

- It saves information about what it finds on each page
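
The steps above can be sketched in a few lines of Python. This is only a simplified illustration, not how any real search engine is built: the site is a made-up dictionary called `SITE_LINKS` (an assumption for this example) instead of real web pages, and "saving information" just means remembering the page address.

```python
from collections import deque

# Hypothetical site: each page maps to the pages it links to.
SITE_LINKS = {
    "/": ["/about", "/blog"],
    "/about": ["/"],
    "/blog": ["/blog/post-1", "/blog/post-2"],
    "/blog/post-1": ["/blog"],
    "/blog/post-2": ["/blog/post-1"],
    "/orphan": [],  # no page links here, so the crawl never finds it
}

def crawl(start, links):
    """Visit pages breadth-first, following links from page to page."""
    seen = {start}
    queue = deque([start])
    order = []
    while queue:
        page = queue.popleft()
        order.append(page)  # stand-in for "saving information" about the page
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return order

visited = crawl("/", SITE_LINKS)
print(visited)
```

Notice that `/orphan` never appears in the result. Because no other page links to it, a bot that only follows links has no way to reach it.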

To help these bots, websites have two special tools:

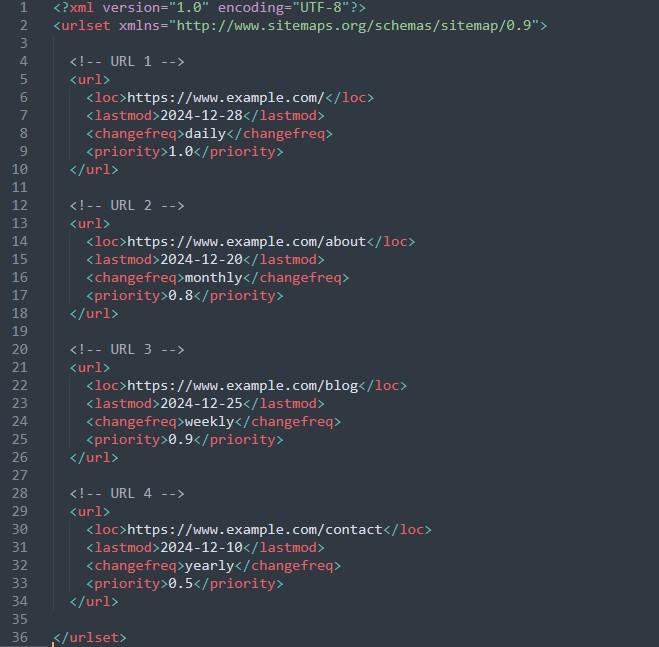

- An XML sitemap file: Think of this like a map that shows all the important pages on your website

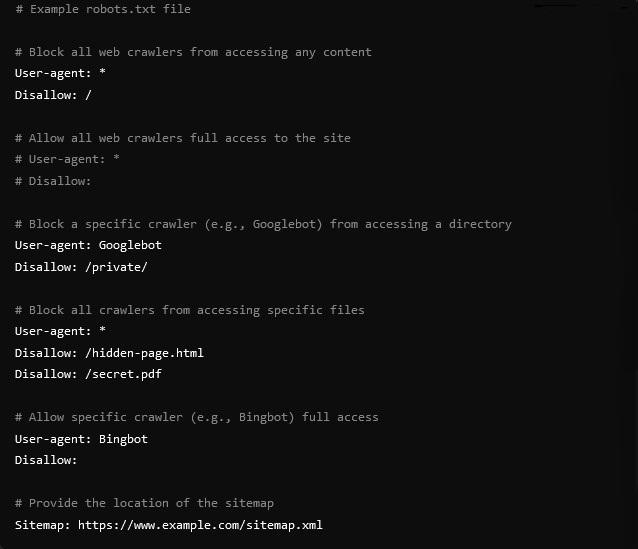

- A robots.txt file: This is like leaving instructions for the bots about which parts of your website they can and can’t visit
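
To make the robots.txt idea concrete, here is a small sketch that uses Python’s built-in `urllib.robotparser` module to read a sample file and ask whether a bot may visit a page. The file contents, the `example.com` address, and the bot name `ExampleBot` are all made up for this illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: keep bots out of /admin/ and point them to the sitemap.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/

Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

def allowed(path):
    """Return True if the (made-up) bot may fetch this path."""
    return parser.can_fetch("ExampleBot", "https://example.com" + path)

print(allowed("/blog/post-1"))     # a normal page
print(allowed("/admin/settings"))  # a page under the blocked folder
print(parser.site_maps())          # sitemap addresses listed in the file
```

The same file does both jobs described above: the `Disallow` line tells bots where not to go, and the `Sitemap` line tells them where to find the map of your pages.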

Search engines also give each site a ‘crawl budget’: roughly, the number of pages their bots will visit on that site in a given period of time. Websites should make it easy for bots to find important pages within that budget.
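
Here is a rough sketch of why a limited budget matters. It treats the budget as a fixed number of pages, which is a simplification (real search engines decide this in more complex ways), and the `LINKS` dictionary is a made-up site used only for illustration.

```python
from collections import deque

# Hypothetical site: the sale page is buried three clicks from the home page.
LINKS = {
    "/": ["/products", "/blog"],
    "/products": ["/products/shoes"],
    "/blog": [],
    "/products/shoes": ["/products/shoes/sale"],
    "/products/shoes/sale": [],
}

def crawl_with_budget(start, links, budget):
    """Breadth-first crawl that stops after fetching `budget` pages."""
    seen = {start}
    queue = deque([start])
    fetched = []
    while queue and len(fetched) < budget:
        page = queue.popleft()
        fetched.append(page)
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return fetched

fetched = crawl_with_budget("/", LINKS, budget=3)
print(fetched)
```

With a budget of three pages, the bot in this sketch runs out before it reaches the deeply buried sale page. Linking to important pages from the home page keeps them within reach.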

Why Crawling is Important

Crawling matters because if search engines can’t read your website, they can’t show it to people in search results. It’s like having a great store that nobody can find because it’s not on any map!

When search engines crawl your site, they can:

- Find your newest content quickly.

- See when you change or update old pages.

- Make sure all your pages can be found. If they can’t crawl a page, they can’t read its content, and it is unlikely to show up in search results.

- Collect the content that is later used to judge how helpful your site is, which affects how it ranks.

Crawling is just the first step. You also need to make your site easy to read and useful for it to rank well.

Making Your Website Easy to Crawl

Here are some simple ways to help search engines crawl your website better:

- Add an XML Sitemap: Create a clear sitemap to guide bots to all your pages.

- Check Your Robots.txt File: Make sure important pages aren’t blocked.

- Link pages together: Make sure all your pages link to each other in a way that makes sense.

- Speed Up Your Website: Slow pages take longer to load, so bots may get through fewer of them on each visit.

- Fix Broken Links: Broken links stop bots from exploring your site properly.

- Create Easy-to-Read URLs: Use clear website addresses (URLs) that people can understand.

- Avoid Duplicate Content: If the same content appears on multiple pages, bots spend time re-reading it, and search engines may not know which version to show.
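
As an example of the first tip, here is a minimal sketch that builds a basic XML sitemap with Python’s built-in `xml.etree.ElementTree` module. The page addresses are placeholders, and a real sitemap file would normally also start with an XML declaration and is often produced by your website platform or a plugin rather than by hand.

```python
import xml.etree.ElementTree as ET

# Namespace used by the standard sitemap format.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(urls):
    """Return sitemap XML listing each address in a <url><loc> entry."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")

# Placeholder pages for illustration only.
pages = ["https://example.com/", "https://example.com/blog/post-1"]
sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```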

What You Should Do Next

Now that you know about crawling, here are some things you can do:

- Check if Google can find all your important pages

- Make sure your website loads quickly

- Fix any broken links on your site

- Create an XML sitemap if you don’t have one
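
If you are curious how a broken-link check works, here is a small sketch using Python’s built-in `html.parser` module. It looks only at links between pages of a tiny made-up site stored in the `PAGES` dictionary (an assumption for this example); a real checker would download your actual pages and test each link.

```python
from html.parser import HTMLParser

class LinkCollector(HTMLParser):
    """Collect the href value of every <a> tag fed to the parser."""
    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)

# Hypothetical site: page address -> page HTML.
PAGES = {
    "/": '<a href="/about">About</a> <a href="/blog">Blog</a>',
    "/about": '<a href="/">Home</a> <a href="/team">Team</a>',
    "/blog": '<a href="/">Home</a>',
}

def find_broken_links(pages):
    """Return (page, link) pairs where the link points to a missing page."""
    broken = []
    for path, html in pages.items():
        collector = LinkCollector()
        collector.feed(html)
        for href in collector.links:
            if href not in pages:
                broken.append((path, href))
    return broken

broken = find_broken_links(PAGES)
print(broken)
```

In this sketch, the About page links to `/team`, which does not exist, so that link is reported as broken.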

Keep in mind that helping search engines crawl your website is the first step to showing up in search results. Take time to check your website regularly and fix any problems you find.

Common Questions

Q: How do I know if Google is crawling my site?

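A: You can use Google Search Console, a free tool that shows you how Google sees your website. Its URL Inspection tool and Crawl Stats report show when Google last crawled a page and how often it visits your site.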

Q: How often will search engines crawl my site?

A: It depends on how often you update your site and how important search engines think it is. Popular sites get crawled more often.

Q: What if I find crawling errors?

A: Don’t worry! Most crawling errors are easy to fix. Start by checking for broken links and making sure your sitemap is up to date.

Remember, crawling is what helps search engines find and understand your website. Without it, your site won’t show up in search results. To make crawling simple and fast, keep your website organized, fix errors, and follow best practices. This gives your site a better chance of ranking well and attracting more visitors.

Also, take a look at our article on “What is SEO?” to understand the basics of search engine optimization.